Code that predates the current team

Code that hasn't been touched in living memory

Code you're afraid to touch

Code that does not have an automated test suite

Faster development; faster time to market

More robust, error-free code

YAGNI: Writing tests first avoids writing unneeded code.

Easier to find and fix bugs

Easier to add features or change behavior; less worry about unintentionally introducing bugs

Makes refactoring/optimization possible: any change that doesn't break a test suite is de facto acceptable.

How many of you have seen a comment like this?

/* I have no idea how this works but it seems to. Whatever you
   do, don't touch this function, and don't break this code! */

Entire systems are sometimes ruled off-limits because no one understands them; yet these systems still need to be maintained.

Newly discovered bugs

Ports to new hardware and operating systems:

Vista

x86 Mac OS X (requires port to new IDE as well)

Java 6

Changing external environment:

Y2K

Euro

Sarbanes-Oxley

etc.

Adding new features to legacy code?

Debugging legacy code?

Optimizing legacy code?

Refactoring legacy code?

Porting legacy code?

Other maintenance tasks?

You will not have perfect test coverage

But this is not a binary decision.

Some tests are better than none. More tests are better than fewer.

Presumably the application is already working, at least mostly.

A broad test that covers a large swath of the application will help you notice if any of your changes break something.

Since you have finite time to spend writing tests, it is more important to get broad coverage than targeted coverage.

Of course, make sure anything you're changing is covered.

And anything you're adding can be done test-first

Usually TDD writes just enough test code to make the tests fail, then writes only as much model code as it takes to make all tests pass.

When working with legacy code, the model code is mostly already written.

Not uncommon to write a lot of tests before switching back to model code.

Actually, we give this up in only one direction: when adding new features, we can use more traditional test-driven approaches.

Some important aspects of test-driven development can apply to legacy testing:

The tests are completely automated. One-button or even no-button testing.

Test failures are blindingly obvious. Manual scanning of the results is not necessary.

Test suite runs quickly enough to run before every check-in.

Most existing xUnit test libraries work well:

JUnit

NUnit

CppUnit

PyUnit

etc.

A few frameworks may work better:

TestNG

Fit

FitNesse

Write one test.

It almost doesn't matter what it tests.

Make it as broad as possible

Automate it.

This is a smoke test.

The main() method is sometimes a good place to start

It's where the application expects to start

It can reach into almost any part of the code

Doesn't always work; many main() methods are not designed to be called more than once or from another main() method
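A minimal sketch of such a smoke test, assuming a hypothetical legacy class `LegacyApp` whose `main()` prints a report. It is plain Java so it runs without a test framework; in JUnit 3.8 the body of `testMain` would live in a `TestCase` subclass. The test captures `System.out`, calls `main()`, and fails if the call throws or prints nothing.

```java
import java.io.ByteArrayOutputStream;
import java.io.PrintStream;

// Hypothetical stand-in for the legacy application under test.
class LegacyApp {
    public static void main(String[] args) {
        String name = args.length > 0 ? args[0] : "world";
        System.out.println("Report for " + name);
    }
}

class SmokeTest {
    // Runs the whole application through its normal entry point and
    // returns whatever it printed, restoring System.out afterwards.
    static String runMain(String... args) {
        PrintStream original = System.out;
        ByteArrayOutputStream captured = new ByteArrayOutputStream();
        System.setOut(new PrintStream(captured, true));
        try {
            LegacyApp.main(args);
        } finally {
            System.setOut(original);
        }
        return captured.toString();
    }

    // Broad, shallow check: the program ran to completion and produced output.
    static void testMain() {
        String output = runMain("payroll");
        if (output.isEmpty()) {
            throw new AssertionError("main() produced no output");
        }
    }
}
```

If the real `main()` calls `System.exit()` or leaves static state behind, this harness will kill the test run or misbehave on a second call, which is exactly the limitation noted for main() methods.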

After the first test is written, pull out any initialization and cleanup code into fixtures.

Then think of as many other tests as you can quickly and easily write based on these fixtures.
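Pulling setup and cleanup into fixtures might look like the following sketch. It mimics JUnit 3.8's lifecycle (`setUp()`, the test, then `tearDown()` in a `finally` block) with a small `run` method so it needs no library; `Database` is a hypothetical in-memory stand-in for whatever resource the first smoke test had to create inline.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical in-memory stand-in for a resource the legacy app needs.
class Database {
    private final List<String> rows = new ArrayList<>();
    private boolean open = true;

    void insert(String row) {
        if (!open) throw new IllegalStateException("closed");
        rows.add(row);
    }
    int count() { return rows.size(); }
    void close() { open = false; }
    boolean isOpen() { return open; }
}

class EmployeeTests {
    Database db;

    // Fixture: shared initialization extracted from the first test.
    void setUp() {
        db = new Database();
        db.insert("Alice");
        db.insert("Bob");
    }

    // Fixture: shared cleanup, always run after each test.
    void tearDown() {
        db.close();
    }

    void testInitialCount() {
        if (db.count() != 2) throw new AssertionError("expected 2 rows");
    }

    void testInsertAddsRow() {
        db.insert("Carol");
        if (db.count() != 3) throw new AssertionError("expected 3 rows");
    }

    // Same order JUnit 3.8 uses: setUp, the test, then tearDown even on failure.
    void run(Runnable test) {
        setUp();
        try {
            test.run();
        } finally {
            tearDown();
        }
    }
}
```

Because `setUp()` rebuilds the fixture before every test, `testInsertAddsRow` cannot leak its extra row into `testInitialCount`, so new tests can be added in any order.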

Functional? Acceptance? System Integration?

Standard conformance test suites (e.g. ANSI SQL, W3C XML)

Manual test scripts

Can you turn these into unit tests?

Either automatically or manually?

May need to mock out parts

Probably need to subset the suite

You may be able to write one JUnit test that runs an entire existing suite

Later, break it into smaller units
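One way to wrap an existing suite in a single test is to treat each case as data, loop over all of them, and collect every failure instead of stopping at the first. This sketch assumes the cases are simple input/expected-output pairs held in memory; a real conformance suite would more likely be read from its own files, and `normalizeWhitespace` is a hypothetical function standing in for the legacy code.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class LegacyText {
    // Hypothetical legacy function under test.
    static String normalizeWhitespace(String s) {
        return s.trim().replaceAll("\\s+", " ");
    }
}

class ExistingSuiteTest {
    // Each entry is one case from the pre-existing suite: input -> expected output.
    static Map<String, String> loadCases() {
        Map<String, String> cases = new LinkedHashMap<>();
        cases.put("  a  b ", "a b");
        cases.put("a\tb\nc", "a b c");
        cases.put("", "");
        return cases;
    }

    // Runs every case and describes each one that fails.
    static List<String> runAll(Map<String, String> cases) {
        List<String> failures = new ArrayList<>();
        for (Map.Entry<String, String> c : cases.entrySet()) {
            String actual = LegacyText.normalizeWhitespace(c.getKey());
            if (!actual.equals(c.getValue())) {
                failures.add("input [" + c.getKey() + "]: expected [" + c.getValue()
                        + "] but got [" + actual + "]");
            }
        }
        return failures;
    }

    // One broad test covering the whole suite.
    static void testExistingSuite() {
        List<String> failures = runAll(loadCases());
        if (!failures.isEmpty()) {
            throw new AssertionError(failures.size() + " case(s) failed: " + failures);
        }
    }
}
```

Breaking it into smaller units later can be as simple as turning each map entry into its own test method.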

Supply an initial bad expectation to a test to make sure it fails

Use the actual value returned as the expectation for future runs of the test
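A sketch of that two-step process, assuming a hypothetical legacy `Payroll.netPayCents` method whose intended rules nobody remembers. The first version of the test deliberately expects `0` so the failure message reveals what the code actually returns; that value is then written in as the expectation, pinning current behavior so later changes can't silently alter it. The `assertEquals` helper only imitates JUnit's message format.

```java
// Hypothetical legacy calculation whose exact rules are undocumented.
class Payroll {
    static long netPayCents(long grossCents) {
        long tax = grossCents * 22 / 100;     // flat 22% withholding
        long fee = Math.min(500, grossCents); // flat $5.00 processing fee
        return grossCents - tax - fee;
    }
}

class PayrollCharacterizationTest {
    static void assertEquals(long expected, long actual) {
        if (expected != actual) {
            throw new AssertionError("expected:<" + expected + "> but was:<" + actual + ">");
        }
    }

    // Step 1: a deliberately bad expectation. Running it fails with
    // "expected:<0> but was:<77500>", which tells us what the code does today.
    static void testNetPayFirstRun() {
        assertEquals(0, Payroll.netPayCents(100_000));
    }

    // Step 2: the observed value becomes the expectation for future runs.
    static void testNetPay() {
        assertEquals(77_500, Payroll.netPayCents(100_000));
    }
}
```

The finished test says nothing about whether 77,500 cents is *correct*; it records what the code does now, which is what you need before changing it.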

The fastest gains in code coverage come from starting your tests at the highest level.

Look at what the application is doing, and write a test for each separate task.

For instance, in a human resources application you might write one test each for:

New hire

Fire employee

Generate payroll

Schedule vacation

Raise salary

Change address

etc.

Notice that none of these have anything to do with how the code is structured. They don't even care what language the program is written in.
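A sketch of tests organized by task rather than by code structure, assuming a hypothetical `HrSystem` facade. In a real application each test would drive the existing code through whatever high-level entry points it already exposes.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical high-level facade over the legacy HR application.
class HrSystem {
    private final Map<String, Long> salaries = new HashMap<>();
    private final Map<String, String> addresses = new HashMap<>();

    void hire(String name, long salary, String address) {
        salaries.put(name, salary);
        addresses.put(name, address);
    }
    void fire(String name) {
        salaries.remove(name);
        addresses.remove(name);
    }
    void raiseSalary(String name, long amount) { salaries.merge(name, amount, Long::sum); }
    void changeAddress(String name, String address) { addresses.put(name, address); }
    boolean isEmployed(String name) { return salaries.containsKey(name); }
    Long salaryOf(String name) { return salaries.get(name); }
    String addressOf(String name) { return addresses.get(name); }
}

// One test per user-visible task; none depends on how HrSystem is built inside.
class HrTaskTests {
    static void check(boolean condition, String message) {
        if (!condition) throw new AssertionError(message);
    }

    static HrSystem withEmployee() {
        HrSystem hr = new HrSystem();
        hr.hire("Ann", 50_000, "1 Main St");
        return hr;
    }

    static void testNewHire() {
        check(withEmployee().isEmployed("Ann"), "hired employee should be on staff");
    }

    static void testFireEmployee() {
        HrSystem hr = withEmployee();
        hr.fire("Ann");
        check(!hr.isEmployed("Ann"), "fired employee should be off staff");
    }

    static void testRaiseSalary() {
        HrSystem hr = withEmployee();
        hr.raiseSalary("Ann", 5_000);
        check(hr.salaryOf("Ann") == 55_000L, "salary should include the raise");
    }

    static void testChangeAddress() {
        HrSystem hr = withEmployee();
        hr.changeAddress("Ann", "2 Oak Ave");
        check("2 Oak Ave".equals(hr.addressOf("Ann")), "address should be updated");
    }
}
```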

Initially, focus on the main path of the application, not the edge conditions

As the test suite expands, you can start looking at the edge cases

Even if you suspect or have diagnosed particular bugs, you still need tests for the main focus of the application.

Remember: one of the main goals of this approach is to avoid accidentally breaking something when fixing a bug somewhere else.

Not as important as testing by function

A different way of organizing your thinking about the tests will dissect the application differently, cover different code, and reveal different things

Dissecting the human head: the discovery of the sphenomandibularis muscle, a new muscle of mastication found by using a different pattern of cuts

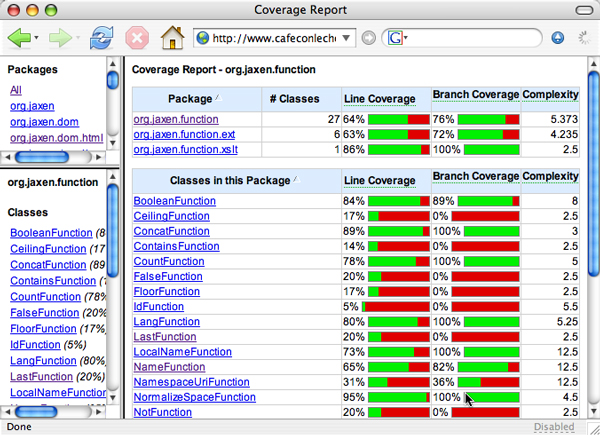

One test per package/module

org.jaxen.dom.html

One test per class

One test per method

One test per line

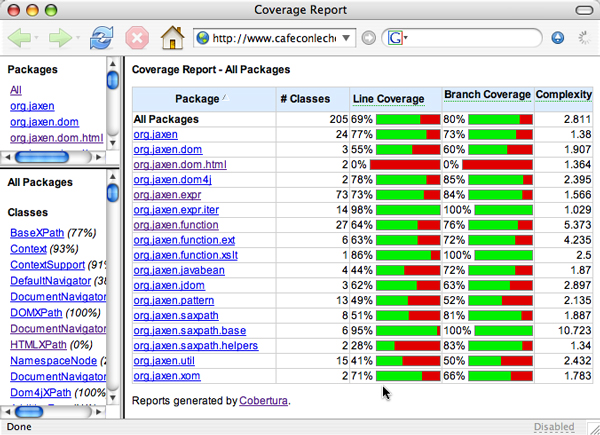

How well are you doing?

More importantly, what have you missed?

Clover

Cobertura

Not Jester

Cenqua Clover ($250-$2500 payware): http://www.cenqua.com/clover/

Cobertura (GPL): http://cobertura.sourceforge.net/

Coverlipse Eclipse plug-in: http://coverlipse.sourceforge.net/index.php

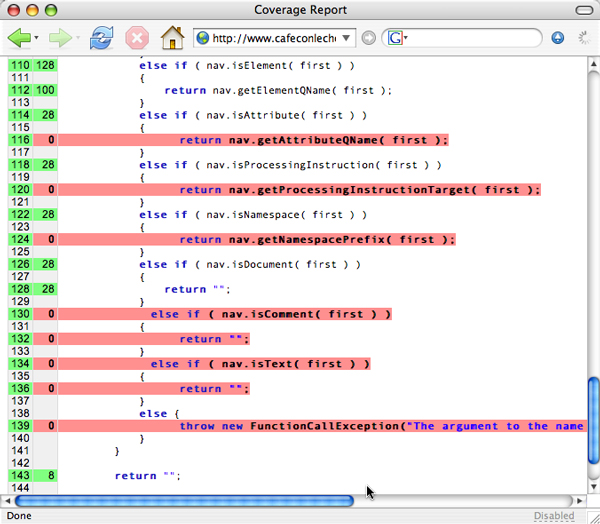

The tool instruments the bytecode with extra code to measure which statements are and are not reached.

Test suite is run. Data is collected.

Data is analyzed to generate reports (often in HTML) showing line by line what is and is not reached.

Any line that isn't reached isn't being tested.
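A toy illustration of what the instrumentation does, assuming nothing about how Clover or Cobertura work internally: each hand-written `Coverage.hit(n)` probe stands in for the counter a tool would inject into the bytecode before a statement, and `unreached()` plays the role of the report. `Discount` is a hypothetical legacy method.

```java
import java.util.ArrayList;
import java.util.List;

// Stand-in for the counters a coverage tool injects; one slot per probe.
class Coverage {
    static final int[] hits = new int[4];
    static void hit(int probe) { hits[probe]++; }

    // "Report": every probe with a zero count marks code no test exercised.
    static List<Integer> unreached() {
        List<Integer> missed = new ArrayList<>();
        for (int i = 0; i < hits.length; i++) {
            if (hits[i] == 0) missed.add(i);
        }
        return missed;
    }
}

// Hypothetical legacy method, shown as it might look after instrumentation.
class Discount {
    static int apply(int price, boolean member) {
        Coverage.hit(0);
        int result = price;
        if (member) {
            Coverage.hit(1);
            result = price * 90 / 100;
        } else if (price > 1000) {
            Coverage.hit(2);
            result = price - 50;
        }
        Coverage.hit(3);
        return result;
    }
}
```

If the suite only calls `apply(500, true)` and `apply(200, false)`, probe 2 (the large non-member discount) is the one left at zero, so that branch is untested.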

I'm going to use JUnit 3.8 in the examples, but this approach is normally test-framework agnostic.

Does covered == tested? A necessary but not sufficient condition.
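A small illustration of why coverage is necessary but not sufficient. The hypothetical `average` method has a bug; the first test executes every line of it and asserts nothing, so a line-coverage report would show the method fully covered while the bug goes unnoticed. Only the second test, which checks the result, catches it.

```java
class Stats {
    // Hypothetical legacy method with a bug: integer division truncates the mean.
    static double average(int[] values) {
        int sum = 0;
        for (int v : values) sum += v;
        return sum / values.length; // bug: should be (double) sum / values.length
    }
}

class StatsTests {
    // Reaches every line of average(), so the method shows as fully covered...
    static void testAverageRuns() {
        Stats.average(new int[] {1, 2});
    }

    // ...but only a test with a real expectation notices the wrong answer.
    static void testAverageValue() {
        double actual = Stats.average(new int[] {1, 2});
        if (actual != 1.5) {
            throw new AssertionError("expected 1.5 but was " + actual);
        }
    }
}
```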

http://www.cafeconleche.org/cobertura-jaxen/

Can use reflection to create one test per method like this:

public void testMethodName() {
    fail("Test Code Not Written Yet");
}

Or maybe like this:

public void testMethodName() {
    // TODO fill in test code
}

Payware automatic test writers aren't too useful; they don't really go beyond what static analysis already tells you.
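Generating the one-stub-per-method skeleton with reflection could be sketched as below: it lists a class's declared public methods and emits the failing JUnit 3.8-style stub for each, as source text to paste into a test class. `Invoice` is a hypothetical class standing in for the legacy code, and `StubGenerator` is an illustrative helper, not an existing tool.

```java
import java.lang.reflect.Method;
import java.lang.reflect.Modifier;
import java.util.Arrays;
import java.util.Comparator;
import java.util.LinkedHashSet;
import java.util.Set;

// Hypothetical legacy class to generate stubs for.
class Invoice {
    public void addLine(String item, int cents) { }
    public int total() { return 0; }
    public String format() { return ""; }
    private void recalc() { }
}

class StubGenerator {
    // Builds one "testXxx" stub per public method declared directly on the class.
    static String stubsFor(Class<?> type) {
        Method[] methods = type.getDeclaredMethods();
        // getDeclaredMethods() returns no particular order; sort for stable output.
        Arrays.sort(methods, Comparator.comparing(Method::getName));
        Set<String> names = new LinkedHashSet<>(); // overloads share one stub
        for (Method m : methods) {
            if (Modifier.isPublic(m.getModifiers()) && !m.isSynthetic()) {
                names.add(m.getName());
            }
        }
        StringBuilder out = new StringBuilder();
        for (String name : names) {
            String testName = "test" + Character.toUpperCase(name.charAt(0)) + name.substring(1);
            out.append("public void ").append(testName).append("() {\n")
               .append("    fail(\"Test Code Not Written Yet\");\n")
               .append("}\n\n");
        }
        return out.toString();
    }
}
```

Swapping the `fail(...)` line for a `// TODO` comment gives the second variant; keeping `fail` is noisier, but it makes an unwritten test impossible to overlook.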

Don't neglect static analysis though. It will find real bugs you haven't noticed.

FindBugs

PMD

lint4j

gcc -Wall

Agitar

etc.

You will find bugs.

Normally TDD stops testing and starts fixing when a bug is first noticed by the tests.

But this assumes that you have tests for everything else in the model and can be fairly confident that you'll find out immediately if your fix breaks some other piece of the system.

Three questions

Does the bug seem simple, obvious, and local?

Do you understand the code where the bug appears?

Do you understand the fix?

If the answer to all three questions is yes, go ahead and fix the bug.

If the answer is no, then you should probably expand the test suite first before fixing the code.

Much legacy code is not designed with testability in mind

Refactoring can improve it so it's more testable

Catch-22: Refactoring with confidence requires a good test suite

All I can say is be careful.

Most automated refactoring tools, for Java at least, are fairly reliable.
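One common testability refactoring, sketched here with hypothetical `LateFee` classes, is to turn a hard-wired dependency (here, the system clock) into a parameter so a test can supply a fixed value. The change is small and mechanical, the kind an automated refactoring such as introducing a parameter can do, which keeps the risk low while tests are still thin.

```java
import java.util.function.LongSupplier;

// Hypothetical "before": the current time is hard-wired, so results vary by run.
class LateFeeBefore {
    static long feeCents(long dueMillis) {
        long now = System.currentTimeMillis();
        return now > dueMillis ? 1_000 : 0;
    }
}

// Hypothetical "after": the clock is passed in, so tests can control it.
class LateFee {
    static long feeCents(long dueMillis, LongSupplier clock) {
        long now = clock.getAsLong();
        return now > dueMillis ? 1_000 : 0;
    }

    // Existing callers keep the old behavior through this overload.
    static long feeCents(long dueMillis) {
        return feeCents(dueMillis, System::currentTimeMillis);
    }
}
```

The one-argument overload keeps every existing caller compiling unchanged, which matters when those callers have no tests around them yet.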

Some tests are better than none

Broader tests work better for legacy code than narrow unit tests

Don't let the perfect be the enemy of the good.

This presentation: http://www.cafeaulait.org/slides/ad2007/legacy/

Testing legacy code: http://www-128.ibm.com/developerworks/java/library/j-legacytest.html

Working Effectively with Legacy Code

Michael Feathers

Prentice Hall, 2004

ISBN 0-13-117705-2

Cobertura: http://cobertura.sourceforge.net/

Measure test coverage with Cobertura: http://www.ibm.com/developerworks/java/library/j-cobertura/

Clover: http://www.cenqua.com/clover/

JUnitDoclet: http://www.junitdoclet.org/